As a user enters virtual space and the physical world falls away, immersive technology takes on a responsibility to build trust with the user while still providing a compelling experience.

As UX designers, let’s face it—we’ve gotten comfortable designing for flat screens. We know the patterns that work and the affordances that will make an experience intuitive. We know how to design for diverse devices and platforms and their variable pixel densities. We know how to collaborate closely with engineering teams to bring an experience to market.

However, designing for dimension, though as natural as the world around us, is not that simple. Perhaps virtual reality captures our attention simply because it delivers a user experience we’ve always anticipated from the interface. Rather than interacting with computing on its terms through two-dimensional terminals, we’re able to engage with the world spatially, naturally, directly.

We need to avoid the common problem seen with every emergent platform—simply porting over UX paradigms we know have always worked. When it comes to virtual experiences, shall we force our user to engage with the world through virtualized flat panels? Are we designing something that feels native to the platform?

Such port-over paradigms are already prevalent in virtual experiences popping up on Google Cardboard, HTC Vive, and Oculus Rift.

As a user enters virtual space and the physical world falls away, immersive technology takes on a responsibility to build trust with the user while still providing a compelling experience. This complicated relationship is pushing designers to think more broadly about the communication between the user and the virtual environment. Content creators are drawing on architecture, music composition, medicine, fiction and human instincts to build a new vocabulary to communicate with users and provoke new models of interaction.

Through our Immersive Spaces practice, we explore seamless spatial interactions with physical and virtual environments and objects. We focus on 360º interactions and the new paradigms required for creating true immersion and deep sensory experiences. Through project work, our own consumer research and lab experimentation, we have established key perspectives and insights that can improve future virtual reality experiences.

In this article, we will share our framework for mapping VR experiences and outline the design layers to consider when designing virtual reality experiences. While there is much more to be defined in a rapidly evolving space, we have found these to be immediately helpful in ensuring that emerging immersive experiences are both compelling and novel.

Framing the Design Process

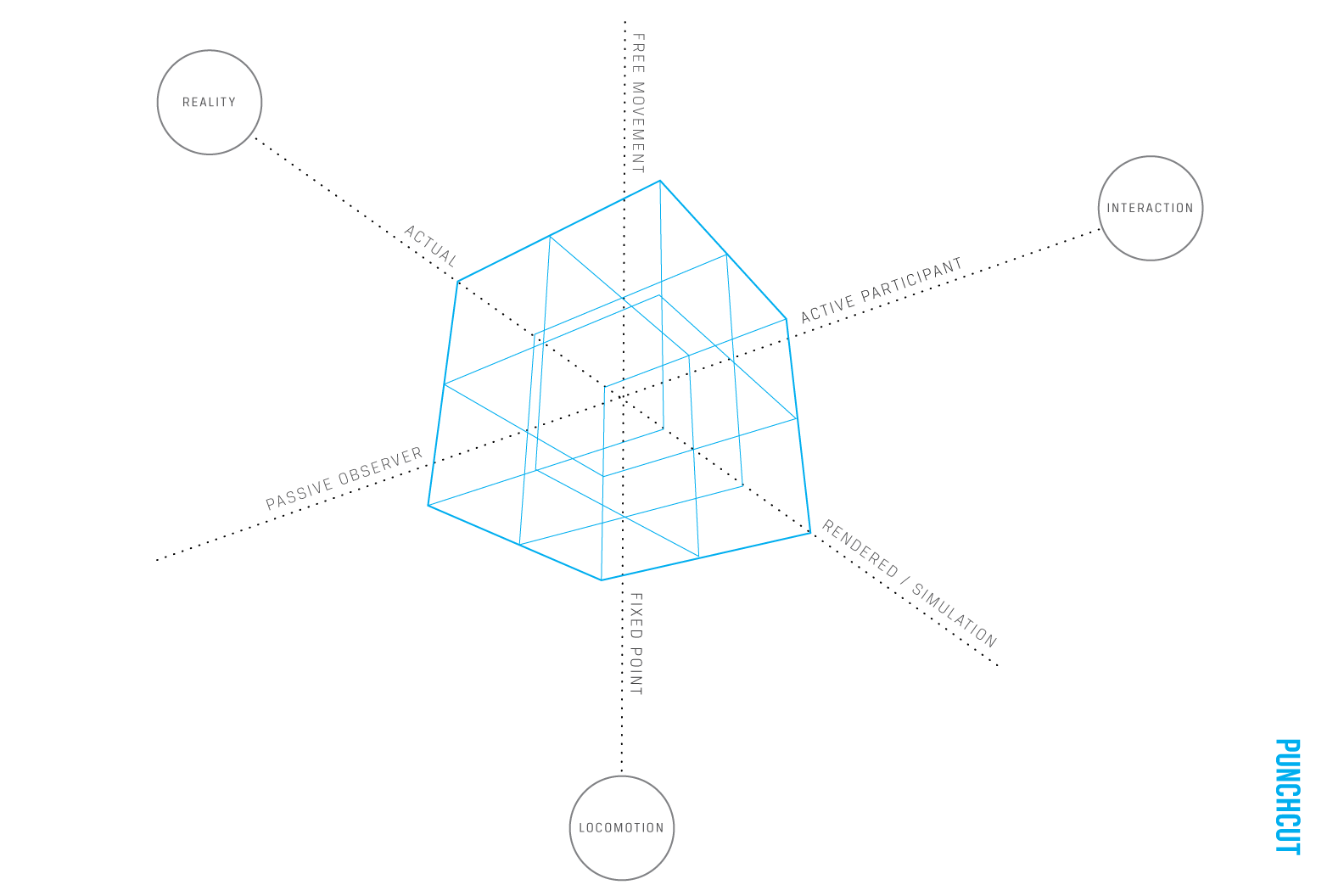

One experience framework that we’ve created allows any virtual or augmented reality experience to be plotted along three axes: Locomotion (Fixed Point vs Free Movement), Interactivity (Passive Observer vs Active Participant), and Reality (Actual vs Simulation). As a fourth axis, Time plays a key role in providing for changing environments across a narrative.

The Punchcut VR Experience Framework uses four axes for mapping any immersive experience: Locomotion, Interactivity, Reality and Time.

Any VR or AR experience can be mapped to this spatial framework. Some examples below:

Active Participant + Simulation + Free Movement = The Void

Passive Observer + Reality + Fixed Point = Remote Proxy 360º Camera

Active Participant + Reality + Fixed Point = Remote Surgery

Passive Observer + Simulation + Free Movement = Google Cardboard for Education
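For teams that want to catalog experiences against the framework, the mappings above can be sketched as a small data model. This is only an illustrative sketch: the class names, the `EXAMPLES` catalog and the `shared_axes` helper are our own hypothetical names, not part of any VR toolkit. The Time axis is left out because the examples above do not place experiences on it, and the "Actual" pole of the Reality axis is the one the examples write as "Reality".

```python
from dataclasses import dataclass
from enum import Enum


class Locomotion(Enum):
    FIXED_POINT = "Fixed Point"
    FREE_MOVEMENT = "Free Movement"


class Interactivity(Enum):
    PASSIVE_OBSERVER = "Passive Observer"
    ACTIVE_PARTICIPANT = "Active Participant"


class Reality(Enum):
    ACTUAL = "Actual"  # written as "Reality" in the examples above
    SIMULATION = "Simulation"


@dataclass(frozen=True)
class ExperienceProfile:
    """One experience's position on the three plotted axes (hypothetical model)."""
    interactivity: Interactivity
    reality: Reality
    locomotion: Locomotion

    def describe(self) -> str:
        # Same ordering as the examples: interactivity + reality + locomotion.
        return " + ".join(
            [self.interactivity.value, self.reality.value, self.locomotion.value]
        )


# The four example mappings from the article.
EXAMPLES = {
    "The Void": ExperienceProfile(
        Interactivity.ACTIVE_PARTICIPANT, Reality.SIMULATION, Locomotion.FREE_MOVEMENT
    ),
    "Remote Proxy 360º Camera": ExperienceProfile(
        Interactivity.PASSIVE_OBSERVER, Reality.ACTUAL, Locomotion.FIXED_POINT
    ),
    "Remote Surgery": ExperienceProfile(
        Interactivity.ACTIVE_PARTICIPANT, Reality.ACTUAL, Locomotion.FIXED_POINT
    ),
    "Google Cardboard for Education": ExperienceProfile(
        Interactivity.PASSIVE_OBSERVER, Reality.SIMULATION, Locomotion.FREE_MOVEMENT
    ),
}


def shared_axes(a: ExperienceProfile, b: ExperienceProfile) -> int:
    """Count the axes (0 to 3) on which two experiences sit at the same pole."""
    return sum(
        [
            a.interactivity is b.interactivity,
            a.reality is b.reality,
            a.locomotion is b.locomotion,
        ]
    )
```

A helper like `shared_axes` is one simple way to surface comparable experiences: two entries that agree on two or three axes are likely to face similar design constraints.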

Immersive experiences exist across a wide spectrum of engagement levels, ranging from looking around a photosphere in Google Cardboard to roaming one of The Void’s entertainment centers. The number of senses employed in the experience will directly inform the level of immersion. An experience that requires the user to hold the lens to their eyes will never have the depth of immersion that comes from a free range of movement combined with a robust sensory experience.

An understanding of where the experience maps in the framework informs the level of immersion and can streamline the processes for designing worlds and levels of interaction. By understanding the appropriate level of immersion, designers can craft a user experience that uses, or omits, particular senses in order to guide a user through the experience. The framework also gives VR UX designers competitive and comparative experiences from which they can observe and learn, serving to validate their design decisions.

Appropriate interaction leads to engagement. For example, viewing a film in VR can be emotionally very powerful. Oculus Cinema on Samsung Gear VR gives the viewer the opportunity to transport themselves to a theater on the moon. However, adding levels of interactivity to such a “lean-back” or passive experience might negatively impact the experience. Conversely, a game with full-body motion and controls can be hindered by long cut scenes that strip away the user’s abilities and shift their role from active participant to idle, passive observer.

Why watch movies in an earth-bound theater? You could do that in real life.

The user’s willingness to invest time or extend interest in an activity will also suggest target hardware and determine their level of immersion. Often the very foreign nature of VR setups serves to repel new users. If an experience is intended to attract users new to virtual reality, there may be some hesitation to commit one’s body to the head-mounted display, headphones and dual controllers of HTC Vive. Peering through Google Cardboard may be an unintimidating first step and serve as a gateway to other, more robust experiences.

There will always be those who feel intimidated and sense that VR “isn’t for me.” But if users are able to overcome this initial trepidation and mental barrier to entry, immersive experiences like the HTC Vive may be able to convert those who may not otherwise have been interested in the technology.

Ultimately, success for these virtual experiences will rely on choosing appropriate levels of immersion and selecting the right tools to fit the user and content.

In the transition to designing for 3D/4D space, engaging a user’s full body senses becomes new territory for a user experience designer to develop their craft. To this end, the VR experience framework also peels back the layers of an experience and provides some guidance on engaging users through their senses at each layer.

Three Layers of Immersive Design

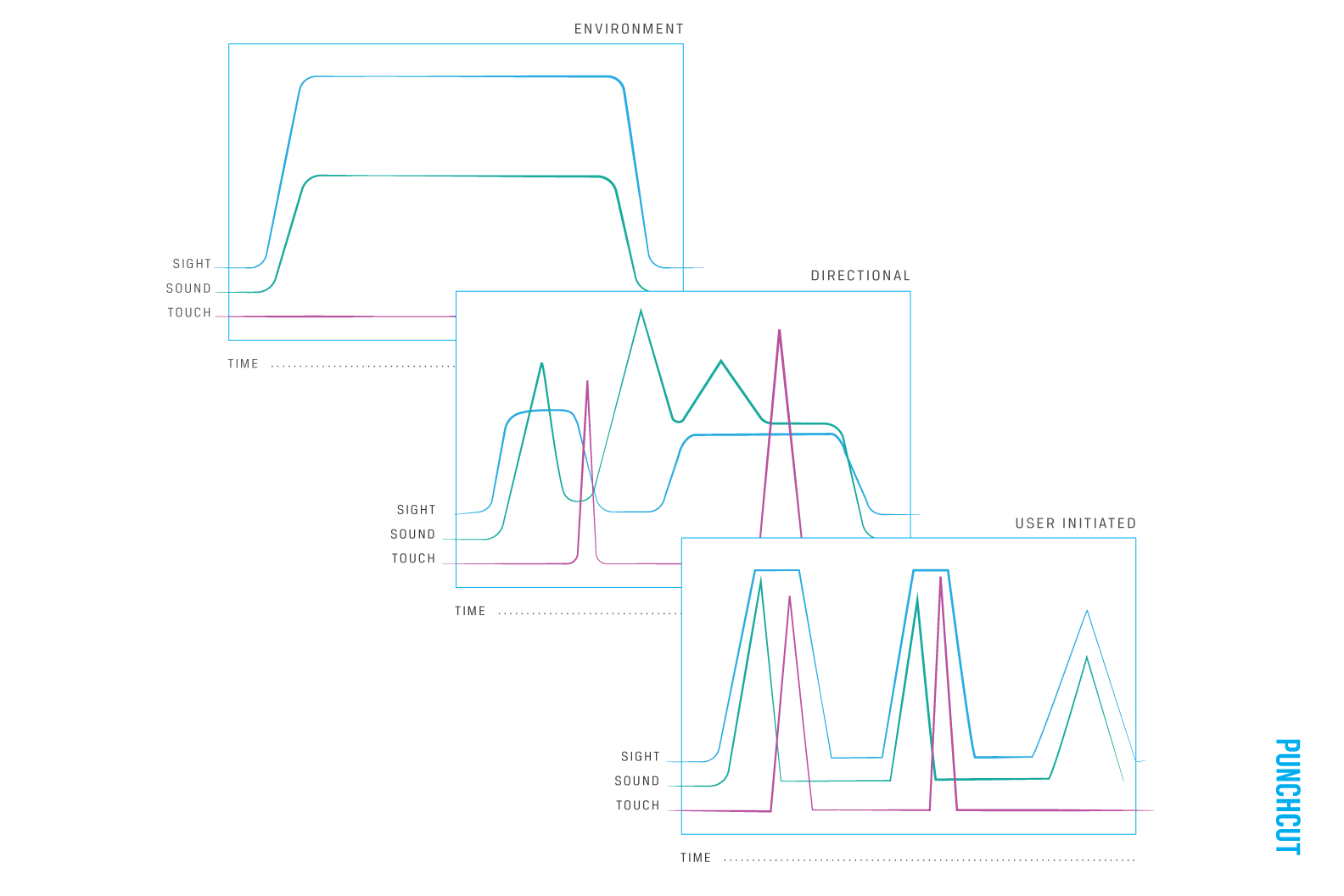

In building immersive spaces and environments, it’s helpful to think in terms of three distinct layers: the world, directional cues, and user interactions. Orchestrating the user’s senses through visuals, holophonic sound and haptics at these three layers can move a user seamlessly through an experience with minimal onscreen UI.

Immersive experiences are not only multisensory, but multilayered. The order and magnitude of visual, audio and haptic stimuli are important in creating believable experiences. Designing for virtual reality means designing three layers of sensory stimuli: the environment, directional cues and feedback to a user’s actions. An example of this is a dim room (environment), a lit rope in the corner (direction) and a trap door opening when the rope is pulled (user-initiated feedback). Coordinating the senses in these three layers creates a framework for understanding the design of any virtual experience.
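One way to make the three layers concrete during planning is to tag each stimulus in a scene with its layer and sense, then check that no layer has been left empty. The sketch below encodes the dim room example; the `Layer`, `Sense` and `Stimulus` types and the `missing_layers` helper are hypothetical names chosen for illustration, not an established tool or API.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    ENVIRONMENT = 1
    DIRECTIONAL_CUE = 2
    USER_FEEDBACK = 3


class Sense(Enum):
    SIGHT = "sight"
    SOUND = "sound"
    TOUCH = "touch"


@dataclass(frozen=True)
class Stimulus:
    """A single sensory element in a scene, tagged by design layer and sense."""
    layer: Layer
    sense: Sense
    description: str


# The dim room example, one stimulus per layer.
DIM_ROOM_SCENE = [
    Stimulus(Layer.ENVIRONMENT, Sense.SIGHT, "dim room"),
    Stimulus(Layer.DIRECTIONAL_CUE, Sense.SIGHT, "lit rope in the corner"),
    Stimulus(Layer.USER_FEEDBACK, Sense.SIGHT, "trap door opens when the rope is pulled"),
]


def missing_layers(scene):
    """Return the layers that have no stimulus in the scene, in layer order."""
    present = {stimulus.layer for stimulus in scene}
    return [layer for layer in Layer if layer not in present]
```

Calling `missing_layers` on a draft scene gives a quick audit: a scene with only an environment, for instance, would report that it still lacks directional cues and feedback.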

Immersive experiences are not only multisensory but multilayered.

Designing the Environment

Environmental stimuli create a world’s atmosphere and mood, and establish the user’s expectations for the experience. The environment also provides context for changes to a user’s location such as teleporting or scene changes. The world may blur forward with the user in a split second to let them know they have moved forward in space, or it may fade out to let them know they are now in a new place or time.

Sight: High and steady throughout the experience

(Horizon, skybox, trees, scenery)

Sound: Mid level throughout experience with some variation

(Wind, birds, ambient music, background texture)

Touch: Haptics are typically absent at this level, but passively experienced in the physical world. (Temperature, physical orientation)

Directional Cues

Directional cues may be used to point a user towards an actionable item, a character, an area of interest or a decision point. These cues highlight overall experience objectives and, in narrative experiences, serve to usher the user through the story.

Sight: Variable but consistent input highlights what the user needs to focus on or act upon. (An open door, a highlighted object, a navigation dot)

Sound: Variable and directional sounds point the user to a character, events or items. (A character speaking, a beep of an incoming call)

Touch: Variable and uncommon haptic or rumble that alerts users to changes in the environment that affect the user’s abilities. (An earthquake, an unexpected scene change)

User Initiated Events and Feedback

Events and feedback orient a user to their abilities within the environment, and are the primary methods for indicating a user’s presence within a game. Multiple senses are often triggered in concert to inform the user of the physics of the world, the objectives of the experience and how to interact with the virtual space.

Sight, sound, and touch are variable and highly impactful when a user triggers abilities, and they are usually triggered together. (Teleporting to another location, firing a gun, opening a door)

This new frontier is as varied as the Wild West, with few guideposts and standards, and it’s an exciting time to be crafting immersive experiences for this emerging space. As the entry price for hardware drops, companies are exposing larger audiences to immersive experiences every day. Engaging entertainment-based experiences serve the already converted early adopters well, but what about everyone else? We see an exciting opportunity to bring best practice UX thinking to virtual experiences as designers partner with content creators to craft the most intuitive and rewarding interactions for real people.

Next, we’ll share design insights to consider when designing VR experiences.

As specialists in UX for devices and content, Punchcut partners with companies to improve their virtual and augmented reality experiences through expert user experience design services focused on consumer research and testing, dimensional interface, and 360º user experience design and architecture.

A Punchcut Perspective

Contributors: Jared Benson, Ken Olewiler, Joy Wong Daniels, Vicky Knoop, Reggie Wirjadi